To honour September 2021 as ‘Animal Pain Awareness Month’, this blog revisits the role of computing and machine learning in recognising animal pain. The usefulness of machine learning (ML) is well established in animal medicine and animal behavioural science across many settings. Indeed, ML is applied extensively to pets, wild animals and especially farm animals, where it supports earlier, more objective and more practical detection of disease. Yet detecting pain in animals remains a challenging task.

Today it is well understood that animals can experience pain, distress and suffering, even though they cannot communicate it in the same way as humans (Sneddon et al., 2014). By nature, many species rarely show clear signs of pain, since hiding them is a survival strategy. Pain is both a sensory and an emotional experience in animals, and species differ in how pain symptoms develop. In turn, this variability and the reluctance to show symptoms have often led to the public perception that many animals are not particularly sensitive to pain. The grand challenge for machine learning applications is hence to find objective means of detecting pain in animals, not only in terms of the methods used but also the data collected and the contextual meaning of those data. We also expect an ML-based system for animal pain detection to be reliable, objective, accurate, efficient, automatic and non-intrusive, setting expectations as high as those we see in human medicine today. Finally, since pain is perceived in real time, real-time implementation of an ML system for animal pain detection can be critical.

The current literature related to ML-assisted animal pain detection falls under three basic categories:

- Detection of animal posture and behaviour that can be associated with the existence of pain.

- Analysis of facial expressions.

- Analysis of various vocalisations.

Typical ML-based systems for recognising animal postures rely on either image recognition or wearable sensors. These methods are considered suitable for automatically analysing animals’ behavioural patterns, in contrast to today’s standard and laborious practice of manually analysing lengthy video material and large data sets. For example, the framework of Rajs and Jayanthi (2018) classifies sick and healthy hens using K-means clustering (for image processing) and K-Nearest Neighbor (KNN) (for audio processing), combining image analysis (for motion patterns) with thermal sensor analysis (for temperature patterns). In another example, Banerjee et al. (2012) presented the design and implementation of a wearable body-mounted sensor system capable of detecting the behaviour of laying hens for remote monitoring applications in non-cage housing systems. By applying ML to the data collected from accelerometers, the hens’ activities can be classified with reasonably high accuracy.
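
To make the accelerometer idea concrete, here is a minimal sketch of a KNN classifier working on windows of three-axis acceleration data. It is not the method of Banerjee et al. (2012): the two features (mean and standard deviation of the acceleration magnitude), the activity labels, the noise levels and the helper names `window_features`, `knn_predict` and `synthetic_window` are all assumptions made for illustration, and the data are synthetic.

```python
import math
import random
from collections import Counter

def window_features(samples):
    """Mean and standard deviation of acceleration magnitude for one window."""
    mags = [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]
    mean = sum(mags) / len(mags)
    var = sum((m - mean) ** 2 for m in mags) / len(mags)
    return (mean, math.sqrt(var))

def knn_predict(train, query, k=3):
    """Majority label among the k training points closest to `query`."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def synthetic_window(rng, noise, n=50):
    """Hypothetical window: gravity on the z axis plus Gaussian noise (in g)."""
    return [(rng.gauss(0, noise), rng.gauss(0, noise), 1.0 + rng.gauss(0, noise))
            for _ in range(n)]

# Build a small labelled training set from synthetic windows (assumed noise levels).
rng = random.Random(0)
train = []
for _ in range(20):
    train.append((window_features(synthetic_window(rng, 0.02)), "resting"))
    train.append((window_features(synthetic_window(rng, 0.40)), "walking"))

# Classify two unseen windows.
print(knn_predict(train, window_features(synthetic_window(rng, 0.02))))
print(knn_predict(train, window_features(synthetic_window(rng, 0.40))))
```

In a real deployment the windows would come from the body-mounted sensor and the labels from annotated video, which is exactly the manual effort such systems aim to reduce.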

Looking at pre-weaned calves, Katamreddy et al. (2017) reviewed the use of ML to classify suckling behaviour during the weaning process. They found that classification can be based on the calf’s head and neck movement patterns, and they discuss integrating relevant sensors (sensor fusion) and deep learning to improve prediction accuracy.

In another ML method, using audio processing techniques, Bishop-Hurley et al. (2014) proposed a system to automatically distinguish between healthy and sick chickens using the k-means algorithm. Yet another group of ML-based studies analyses the movement of groups of animals on farms to draw conclusions about their health. Katamreddy et al. (2017) and Allen et al. (2014) presented statistical results on the correlation between animal behaviours and parameters such as temperature, humidity and movement. Building on this, Dutta (2014) presented supervised machine learning techniques to classify cattle behavioural patterns such as grazing, ruminating, resting, walking and scratching. Likewise, Shrestha (2018) studied the identification of lameness in farmed animals (dairy cows, sheep and horses) using support vector machines (SVM) and KNN together with radar micro-Doppler signatures. As these examples demonstrate, many different ML applications have been developed across multiple animal species.
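
As a rough illustration of how k-means can separate two groups of animals without any labels, the sketch below clusters two-dimensional feature vectors. The features are hypothetical (think of two numbers derived from a sound recording), the data are synthetic, and the farthest-point seeding in `farthest_point_init` is a simple deterministic stand-in for the random or k-means++ seeding used in practice. None of this is taken from the studies cited above.

```python
import math
import random

def farthest_point_init(points, k):
    """Deterministic seeding: start at the first point, then repeatedly
    add the point farthest from the centroids chosen so far."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        )
    return centroids

def kmeans(points, k=2, iterations=10):
    """Plain Lloyd's algorithm: assign each point to its nearest centroid,
    then move each centroid to the mean of its assigned points."""
    centroids = farthest_point_init(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda i: math.dist(p, centroids[i]))
                  for p in points]
        for i in range(k):
            members = [p for p, lab in zip(points, labels) if lab == i]
            if members:
                centroids[i] = tuple(sum(axis) / len(members)
                                     for axis in zip(*members))
    return centroids, labels

# Synthetic data: two well-separated groups of hypothetical feature vectors.
rng = random.Random(42)
healthy = [(rng.gauss(1.0, 0.3), rng.gauss(1.0, 0.3)) for _ in range(30)]
sick = [(rng.gauss(4.0, 0.3), rng.gauss(4.0, 0.3)) for _ in range(30)]

centroids, labels = kmeans(healthy + sick)
print(centroids)
print(labels)
```

Because k-means is unsupervised, it only returns cluster indices. Deciding which cluster corresponds to "sick" still needs domain knowledge or a handful of labelled examples.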

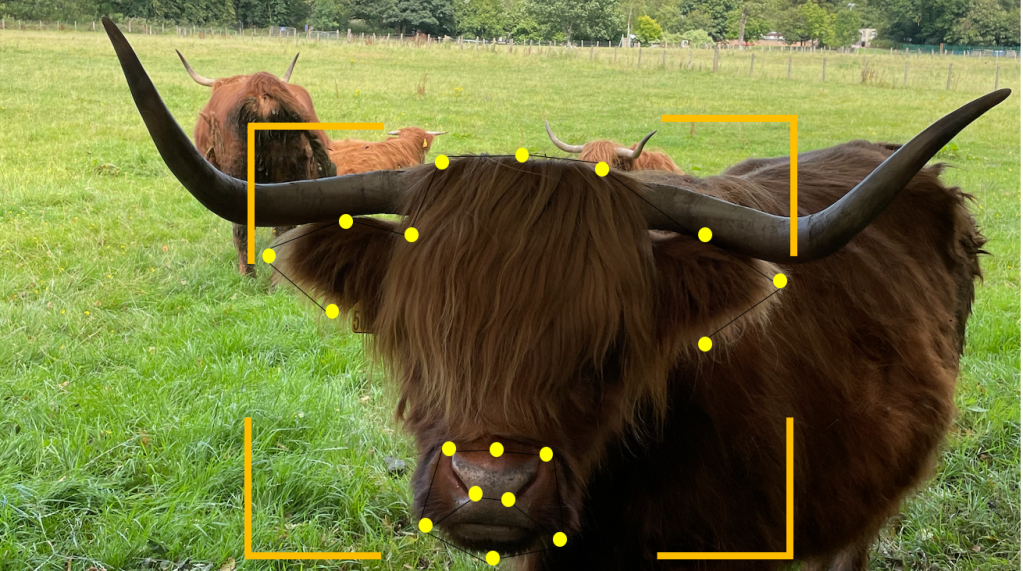

Recently, researchers have started to apply machine learning to study animal pain from different perspectives: estimating pain from facial actions, analysing animal vocalisations to detect pain, and analysing animal behaviour to detect anomalies related to pain (Tuttle et al., 2018; Dalla Costa et al., 2018). For instance, Byabazair et al. (2019) use a pedometer for data acquisition; the resulting behavioural time series are analysed, and an alarm is raised when lameness is detected. Moreover, they propose an end-to-end IoT-based application in an IoT-fog-cloud setting, using this architecture to process cattle behaviour variables including step count, lying time and swaps count. Their paper showed that, with the help of machine learning, lameness in cows could be detected with an accuracy of 87% up to three days before it would have been recognised visually. Using interconnected fog-to-cloud computing systems, the paper also demonstrated that the amount of data exchanged over the network could be reduced from 10.1 MB to 1.61 MB daily.
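
The sketch below shows the general shape of an alarm on a behavioural time series, plus the arithmetic behind the reported data reduction. It is a deliberately simple threshold rule, not the ML model of Byabazair et al. (2019): the function names, the 7-day baseline window, the 0.7 drop ratio and the step counts are all assumptions chosen for illustration.

```python
from statistics import mean

def lameness_alerts(daily_steps, window=7, drop_ratio=0.7):
    """Return the day indices whose step count falls below `drop_ratio`
    times the mean of the previous `window` days (hypothetical rule)."""
    alerts = []
    for day in range(window, len(daily_steps)):
        baseline = mean(daily_steps[day - window:day])
        if daily_steps[day] < drop_ratio * baseline:
            alerts.append(day)
    return alerts

def reduction(raw_mb, sent_mb):
    """Fraction of traffic saved when only `sent_mb` of `raw_mb` is uploaded."""
    return 1 - sent_mb / raw_mb

# Made-up daily step counts for one cow; activity drops on the last two days.
steps = [4100, 3950, 4200, 4050, 3900, 4150, 4000, 3980, 2500, 2300]
print(lameness_alerts(steps))          # [8, 9]

# Figures reported in the text: 10.1 MB reduced to 1.61 MB per day.
print(f"{reduction(10.1, 1.61):.1%}")  # 84.1%
```

Running such a rule, or a trained model, on a fog node close to the farm means only summaries and alerts need to travel to the cloud, which is the kind of saving the reported figures reflect.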

Similarly, Reulke et al. (2018) used a non-intrusive method with fifteen horses undergoing castration surgery to determine their pain level from motion patterns obtained via object detection. Using a support vector machine, they concluded that less movement indicated higher pain levels in horses, with an accuracy of 88%. In sheep, Lu et al. (2017) extended techniques for human facial expression recognition to propose various so-called pain facial expression scales. Again using a support vector machine, they demonstrated that high accuracy in pain detection could be achieved for high levels of pain in sheep. Scientists have also studied vocalisations to determine the level of pain in pigs. For example, Cordeiro et al. (2018) examined sampled audio data from pigs in terms of energy, sound duration, maximum amplitude, intensity and pitch. They found that the pitch frequency, maximum amplitude and signal intensity of pig vocalisations increase as the pain level increases.
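
To give a feel for the vocalisation variables listed above, here is a small sketch that computes them for a sampled signal. The definitions are assumptions rather than those of Cordeiro et al. (2018): intensity is taken as the RMS level in decibels relative to an amplitude of 1.0, and pitch is estimated crudely from zero crossings, which only works for clean, tone-like sounds. The two test signals are synthetic sine waves, not pig recordings.

```python
import math

def vocal_features(signal, sample_rate):
    """Basic descriptors of a non-empty, non-silent mono signal."""
    n = len(signal)
    duration = n / sample_rate
    energy = sum(s * s for s in signal)
    rms = math.sqrt(energy / n)
    # A periodic tone crosses zero twice per cycle, so count sign changes.
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if (a < 0) != (b < 0))
    return {
        "duration_s": duration,
        "energy": energy,
        "max_amplitude": max(abs(s) for s in signal),
        "intensity_db": 20 * math.log10(rms),
        "pitch_hz": crossings / (2 * duration),
    }

def tone(freq_hz, amplitude, seconds, sample_rate=8000):
    """Synthetic sine wave standing in for a recorded call."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate + 0.1)
            for i in range(int(seconds * sample_rate))]

# Hypothetical calls: the second is louder and higher pitched than the first.
calm = vocal_features(tone(300, 0.3, 0.5), 8000)
distressed = vocal_features(tone(900, 0.8, 0.5), 8000)

for name in ("max_amplitude", "intensity_db", "pitch_hz"):
    print(name, round(calm[name], 2), round(distressed[name], 2))
```

Real recordings are noisy and harmonically rich, so a production system would more likely use an autocorrelation or spectral pitch estimator, but the feature set feeding the classifier would look much the same.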

As is well recognised in human medicine, AI must be used rigorously, or it can have several drawbacks. For instance, Yogesh (2018) presents different problems related to the trustworthiness of AI results, for example the identity recognition problem, in which Convolutional Neural Networks (CNNs) are used to identify facial features. CNNs still have precision problems with spatial relationships, which can cause erroneous results. Mislabelling is another frequent problem encountered by ML models, and one that is hugely relevant to a veterinarian who relies on manually collected data that need to be labelled appropriately. As such, misclassification in AI-powered systems can have a direct impact on farm animal welfare.

To summarise, most research on AI-assisted pain detection shows that current methods exhibit relatively low accuracy and require expert-driven, time-consuming computing systems, which makes it hard for people to adopt them. Further, most ML systems lack contextual data that considers other factors, such as the animals’ environment. What future ML systems need are high-quality data sets, data visualisation tools and effective deployment of coordinated IoT, edge and cloud computing systems. Much work remains to be done to relate disease indicators to pain in general, and in real time in particular. We hope that this blog has highlighted areas for future research and that, in time, we as computer scientists can get closer to recognising and preventing pain in animals.